
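While adding an index pattern in kibana today, I found that it did not take effect. The page showed no error; the creation simply flashed by and nothing was saved.

I also noticed that the logs of every project lagged far behind the current time, and filebeat kept reporting i/o timeout errors.

The specific errors are as follows:

filebeat cannot send data to logstash.

IP addresses are replaced with x.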

logstash/async.go:235 Failed to publish events caused by: read tcp 172.17.x.x:39092->172.17.x.x:5044: i/o timeout

logstash reports the errors below: it cannot send data to es, and es keeps rejecting every request.

[INFO][logstash.outputs.elasticsearch] Retrying individual bulk actions that failed or were rejected by the previous bulk request. {:count=>1}

[INFO][logstash.outputs.elasticsearch] retrying failed action with response code: 403 ({"type"=>"cluster_block_exception", "reason"=>"blocked by: [FORBIDDEN/12/index read-only / allow delete (api)];"})

The error says the index is read-only. The es log reports the same index read-only error:

[2018-08-23T17:30:35,546][WARN][o.e.x.m.e.l.LocalExporter] unexpected error while indexing monitoring document

org.elasticsearch.xpack.monitoring.exporter.ExportException: ClusterBlockException[blocked by: [FORBIDDEN/12/index read-only / allow delete (api)];]

at org.elasticsearch.xpack.monitoring.exporter.local.LocalBulk.lambda$throwExportException$2(LocalBulk.java:134) ~[?:?]

at java.util.stream.ReferencePipeline$3$1.accept(ReferencePipeline.java:193) ~[?:1.8.0_144]

at java.util.stream.ReferencePipeline$2$1.accept(ReferencePipeline.java:175) ~[?:1.8.0_144]

at java.util.Spliterators$ArraySpliterator.forEachRemaining(Spliterators.java:948) ~[?:1.8.0_144]

at java.util.stream.AbstractPipeline.copyInto(AbstractPipeline.java:481) ~[?:1.8.0_144]

at java.util.stream.AbstractPipeline.wrapAndCopyInto(AbstractPipeline.java:471) ~[?:1.8.0_144]

at java.util.stream.ForEachOps$ForEachOp.evaluateSequential(ForEachOps.java:151) ~[?:1.8.0_144]

at java.util.stream.ForEachOps$ForEachOp$OfRef.evaluateSequential(ForEachOps.java:174) ~[?:1.8.0_144]

at java.util.stream.AbstractPipeline.evaluate(AbstractPipeline.java:234) ~[?:1.8.0_144]

at java.util.stream.ReferencePipeline.forEach(ReferencePipeline.java:418) ~[?:1.8.0_144]

at org.elasticsearch.xpack.monitoring.exporter.local.LocalBulk.throwExportException(LocalBulk.java:135) ~[?:?]

at org.elasticsearch.xpack.monitoring.exporter.local.LocalBulk.lambda$doFlush$0(LocalBulk.java:118) ~[?:?]

at org.elasticsearch.action.ActionListener$1.onResponse(ActionListener.java:59) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.ContextPreservingActionListener.onResponse(ContextPreservingActionListener.java:43) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.TransportAction$1.onResponse(TransportAction.java:85) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.TransportAction$1.onResponse(TransportAction.java:81) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkRequestModifier.lambda$wrapActionListenerIfNeeded$0(TransportBulkAction.java:571) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.ActionListener$1.onResponse(ActionListener.java:59) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkOperation$1.finishHim(TransportBulkAction.java:380) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkOperation$1.onFailure(TransportBulkAction.java:375) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.TransportAction$1.onFailure(TransportAction.java:91) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.finishAsFailed(TransportReplicationAction.java:908) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.handleBlockException(TransportReplicationAction.java:826) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.handleBlockExceptions(TransportReplicationAction.java:814) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.doRun(TransportReplicationAction.java:712) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.common.util.concurrent.AbstractRunnable.run(AbstractRunnable.java:37) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction.doExecute(TransportReplicationAction.java:169) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction.doExecute(TransportReplicationAction.java:97) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.TransportAction$RequestFilterChain.proceed(TransportAction.java:167) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.xpack.security.action.filter.SecurityActionFilter.apply(SecurityActionFilter.java:125) ~[?:?]

at org.elasticsearch.action.support.TransportAction$RequestFilterChain.proceed(TransportAction.java:165) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.TransportAction.execute(TransportAction.java:139) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.TransportAction.execute(TransportAction.java:81) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction$BulkOperation.doRun(TransportBulkAction.java:350) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.common.util.concurrent.AbstractRunnable.run(AbstractRunnable.java:37) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction.executeBulk(TransportBulkAction.java:462) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction.doExecute(TransportBulkAction.java:175) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.bulk.TransportBulkAction.lambda$processBulkIndexIngestRequest$4(TransportBulkAction.java:514) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.ingest.PipelineExecutionService$2.doRun(PipelineExecutionService.java:98) [elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.common.util.concurrent.ThreadContext$ContextPreservingAbstractRunnable.doRun(ThreadContext.java:638) [elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.common.util.concurrent.AbstractRunnable.run(AbstractRunnable.java:37) [elasticsearch-6.0.0.jar:6.0.0]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_144]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_144]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_144]

Caused by: org.elasticsearch.cluster.block.ClusterBlockException: blocked by: [FORBIDDEN/12/index read-only / allow delete (api)];

at org.elasticsearch.cluster.block.ClusterBlocks.indexBlockedException(ClusterBlocks.java:182) ~[elasticsearch-6.0.0.jar:6.0.0]

at org.elasticsearch.action.support.replication.TransportReplicationAction$ReroutePhase.handleBlockExceptions(TransportReplicationAction.java:812) ~[elasticsearch-6.0.0.jar:6.0.0]

... 20 more

Solution 1

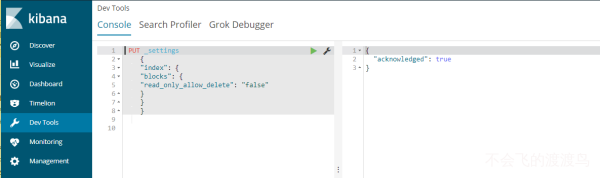

Run the following statement in the kibana Dev Tools console:

PUT _settings

{

"index": {

"blocks": {"read_only_allow_delete": "false"}

}

}

Solution 2

If the command cannot be run from kibana, use the following command instead:

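curl -XPUT -H "Content-Type: application/json" http://localhost:9200/_all/_settings -d '{"index.blocks.read_only_allow_delete": null}'

The cause: once a node's disk usage exceeds 95% (the default flood-stage disk watermark), every index that has one or more shards allocated on that node is forced into read-only mode. In es 6.0, the version shown in the stack trace above, the block is not lifted automatically, so free up disk space before removing it, or it will be applied again.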

As shown in the figure.
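The disk check and the unblock request can also be scripted. The following is a minimal Python sketch, not the method used in this post: it assumes es is reachable at http://localhost:9200 without authentication, reads disk usage from the _cat/allocation API, and sends the same PUT as the curl command only when no node is at or above the 95% threshold. The function names (nodes_over_flood_stage, build_unblock_request, main) are hypothetical helpers introduced for illustration.

```python
import json
import urllib.request

# Default flood-stage watermark in Elasticsearch (assumed unchanged on this cluster).
FLOOD_STAGE_PERCENT = 95


def nodes_over_flood_stage(allocation_rows, threshold=FLOOD_STAGE_PERCENT):
    """Return the names of nodes whose disk usage is at or above the threshold.

    allocation_rows is the parsed JSON from GET _cat/allocation?format=json.
    Values arrive as strings; the row for unassigned shards has a null percentage.
    """
    over = []
    for row in allocation_rows:
        percent = row.get("disk.percent")
        if percent is None:
            continue  # skip the UNASSIGNED row
        if int(percent) >= threshold:
            over.append(row.get("node"))
    return over


def build_unblock_request(base_url="http://localhost:9200"):
    """Build the same request as the curl command: reset the block on all indices."""
    body = json.dumps({"index.blocks.read_only_allow_delete": None}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/_all/_settings",
        data=body,
        method="PUT",
        headers={"Content-Type": "application/json"},
    )


def main(base_url="http://localhost:9200"):
    """Check disk usage first, then clear the block only if no node is too full."""
    with urllib.request.urlopen(f"{base_url}/_cat/allocation?format=json") as resp:
        rows = json.load(resp)
    full_nodes = nodes_over_flood_stage(rows)
    if full_nodes:
        print("Free disk space first on:", ", ".join(full_nodes))
        return
    with urllib.request.urlopen(build_unblock_request(base_url)) as resp:
        print(resp.read().decode("utf-8"))
```

Calling main() runs the check against the local node. Checking the disk first avoids clearing a block that es would simply apply again.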

Checking the logs again, the problem is resolved!
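Re-checking the logs can be sketched as a small scan for the block message quoted earlier. This is only an illustration: count_read_only_errors is a hypothetical helper, and the log path in the usage comment is an assumption that depends on how logstash or es was installed.

```python
import re

# The block message that appears in the logstash and es logs quoted above.
BLOCK_PATTERN = re.compile(r"FORBIDDEN/12/index read-only / allow delete \(api\)")


def count_read_only_errors(lines):
    """Count log lines that still report the read-only index block."""
    return sum(1 for line in lines if BLOCK_PATTERN.search(line))


# Usage (the path is an assumption; adjust it to the actual log location):
# with open("/var/log/logstash/logstash-plain.log", encoding="utf-8") as f:
#     print(count_read_only_errors(f))
```

If the count stops growing after the block is removed, new events are being indexed again.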
